Modern devices have incredible computing power sitting idle. Since the seminal Wu paper in 2019, hardware heterogeneity has only grown: edge devices now span a wider range of CPUs, GPUs, and NPUs.

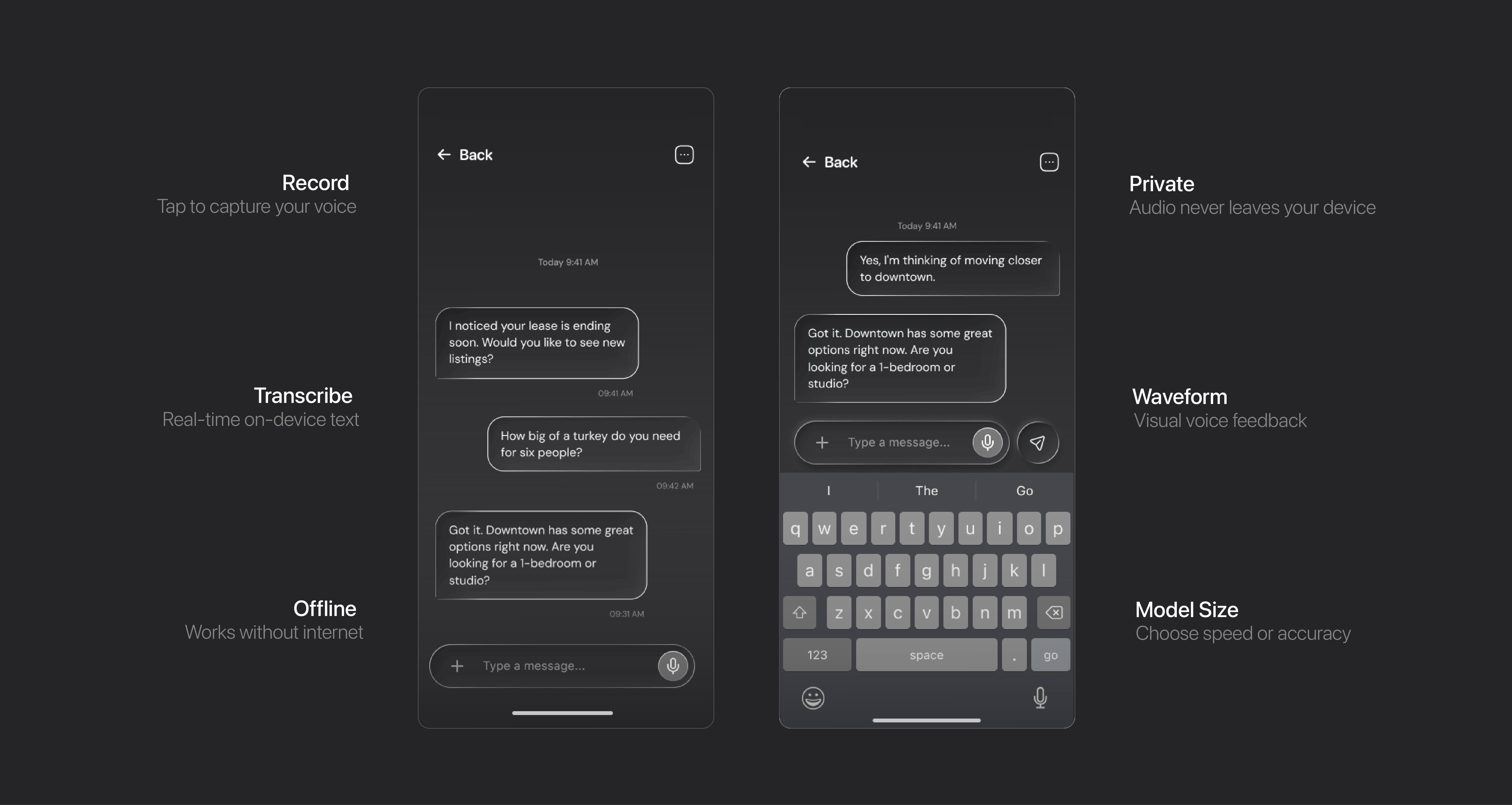

We leverage that hardware to build faster, more private, and more cost-effective AI features. Sutura builds infrastructure for running AI models on your device with no cloud dependencies, no recurring API costs, and complete control over your data and deployment.

We're building the runtime and optimization tools that let businesses deploy voice, audio, and sensor AI at scale, without the overhead of cloud infrastructure, per-request billing, or the environmental cost of massive data centers.